Content moderation is the screening of objectionable content that users publish on a forum or any other platform. The procedure applies pre-determined rules to the posted content. Content that does not comply with the specified criteria is identified and removed.

Content may be removed for several reasons, such as violence, hate speech, vulgarity, extremism, nudity, copyright violations, and other elements. The primary purpose of content moderation is to ensure that the platform or forum is protected and safe to use, and that the brand’s Trust and Safety program is adhered to.

Social media platforms, marketplaces, high-end forums, dating websites or apps, and other similar platforms use content moderation. For more information about social media content moderation and a guide to detecting fake dating profiles, see https://chekkee.com/the-ultimate-guide-to-spotting-fake-dating-profiles/.

How Content Moderation Strategies Work On Social Media

Put simply, it is your duty to confirm that content submitted by users is ethical, lawful, and compliant with your platform’s rules. Considering the types of posts users upload and share on social media today, content moderation is not merely beneficial; it has become a requirement.

Here are some elements that explain how content moderation strategies work on social media.

1. Setting fixed regulations and platform policies

Content moderation helps a social media platform decide what content can and cannot be uploaded. It also makes it possible to remove inappropriate content or posts that involve bullying, harassment, or brand abuse.

2. Specifying who has permission to upload content

Limiting who can upload content helps deter online trolls from gaining access to your app or site and stops them from uploading inappropriate material.

3. Establishing a content strategy

With content moderation, it is easier to decide what kind of content users can upload on social media platforms. The content has to be related to the brand and adhere to the specified social media strategy and standards.

4. Formulating the content submission procedure

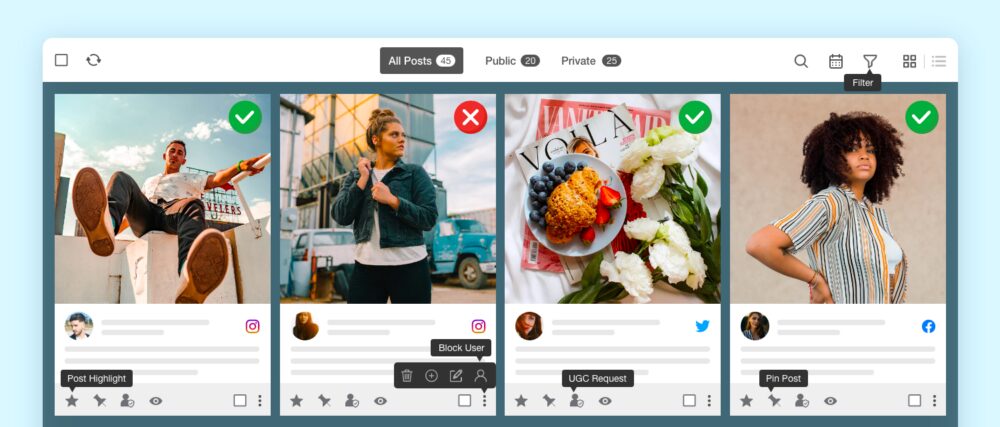

A content moderation strategy authorizes content moderators to assess submitted content before it is published.

5. Checking content from time to time

Content moderators keep a close check on what is being uploaded to make sure that no inappropriate content is posted on the platform.
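The steps above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `Policy` class, the `check_submission` function, the banned term, and the user names are all hypothetical and do not come from any real platform.

```python
from dataclasses import dataclass, field


@dataclass
class Policy:
    # Hypothetical rule set: terms the platform does not allow (step 1).
    banned_terms: set[str] = field(default_factory=set)
    # Hypothetical allow-list of accounts permitted to upload (step 2).
    allowed_uploaders: set[str] = field(default_factory=set)


def check_submission(policy: Policy, user: str, text: str) -> str:
    """Return 'rejected' or 'needs_review' for a submitted post."""
    if user not in policy.allowed_uploaders:
        return "rejected"  # the user has no permission to upload
    # Compare lower-cased words, stripped of basic punctuation, to the rules.
    words = {w.strip(".,!?").lower() for w in text.split()}
    if words & policy.banned_terms:
        return "rejected"  # the post violates a fixed rule
    return "needs_review"  # hand the post to a human moderator (step 4)


policy = Policy(banned_terms={"spamword"}, allowed_uploaders={"alice"})
print(check_submission(policy, "alice", "Hello everyone!"))   # needs_review
print(check_submission(policy, "alice", "Buy spamword now"))  # rejected
print(check_submission(policy, "mallory", "Hello"))           # rejected
```

A plain word list is a deliberately simple stand-in for a platform's rules; real policies usually need human judgment on top of any automatic check.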

Brief Guide To Different Types Of Social Media Moderation

Content moderation is the procedure of ensuring that user-uploaded content adheres to specific criteria set by the social media app or platform, which determine whether it is acceptable for publishing.

User-generated content (UGC) is content created and posted by users. UGC takes many forms, such as images, text, videos, tweets, reviews, posts, and more. The sections below cover the different kinds of social media content moderation.

- Pre-Moderation:

Pre-moderation stops unwanted UGC before it is shared on a platform. The user’s post or drafted content is evaluated to see whether it is safe and ethical for the page’s audience before it goes live. Although posts may take some time to appear on social media apps, pre-moderation ensures that submitted posts do not harm the brand’s reputation. It also deters online bullying and radicalization.

In social media content moderation, pre-screening the content uploaded by all users on every page and group is common. This matters because exclusive online communities with large, frequently interacting followings are more likely to see arguments, improper content, or deceptive posts that are irrelevant to the page’s primary purpose.
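The pre-moderation flow described above can be sketched as a holding queue: nothing is visible until a reviewer accepts it. The `PreModerationQueue` class and the `is_acceptable` callback are hypothetical names chosen for this example, and the keyword check merely stands in for a human moderator's decision.

```python
from collections import deque


class PreModerationQueue:
    """Hold every post until a reviewer approves it (illustrative only)."""

    def __init__(self):
        self.pending = deque()  # posts waiting for review
        self.published = []     # posts visible to the audience

    def submit(self, post: str) -> None:
        # A submitted post is never published directly.
        self.pending.append(post)

    def review_next(self, is_acceptable) -> bool:
        """Review the oldest pending post; publish it only if accepted."""
        post = self.pending.popleft()
        if is_acceptable(post):
            self.published.append(post)
            return True
        return False  # a rejected post never reaches the audience


queue = PreModerationQueue()
queue.submit("Great product, thanks!")
queue.submit("You are an idiot")

# Stand-in for a moderator's judgment: reject posts containing an insult.
def reviewer(post: str) -> bool:
    return "idiot" not in post.lower()

while queue.pending:
    queue.review_next(reviewer)

print(queue.published)  # ['Great product, thanks!']
```

The delay the article mentions corresponds to the time a post spends in `pending` before someone reviews it.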

- Post Moderation:

Post moderation is the opposite of pre-moderation. It lets users publish content online in real time. Improper posts are then filtered out as they are found, and content that infringes the social media community’s regulations and policies is deleted.

The fundamental component of post moderation is the combination of content moderators who scrutinize online content and an automated system that reports anything visually improper for social media viewing. Because it does not interrupt the flow of dialogue among users, it is well suited to monitoring social media comments.
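As a rough sketch of post moderation, the example below publishes every post immediately, lets an automated check flag suspicious ones, and leaves removal to a later moderator pass. The `PostModeratedFeed` class and both callbacks are assumptions made for illustration; a real automated system would be far more sophisticated than a keyword test.

```python
class PostModeratedFeed:
    """Publish first, review afterwards (illustrative only)."""

    def __init__(self, auto_flagger):
        self.auto_flagger = auto_flagger  # hypothetical automated check
        self.visible = {}                 # post_id -> text, live right away
        self.flagged = []                 # post ids awaiting a moderator
        self._next_id = 1

    def publish(self, text: str) -> int:
        post_id = self._next_id
        self._next_id += 1
        self.visible[post_id] = text      # goes live in real time
        if self.auto_flagger(text):
            self.flagged.append(post_id)  # report it for human review
        return post_id

    def moderate(self, violates_policy) -> list:
        """Remove flagged posts that a moderator confirms as violations."""
        removed = []
        for post_id in self.flagged:
            if violates_policy(self.visible[post_id]):
                del self.visible[post_id]
                removed.append(post_id)
        self.flagged.clear()
        return removed


feed = PostModeratedFeed(auto_flagger=lambda text: "hate" in text.lower())
first = feed.publish("Lovely weather today")
second = feed.publish("I hate all of you")

print(sorted(feed.visible))  # [1, 2]: both posts are live before any review
removed = feed.moderate(violates_policy=lambda text: True)
print(removed)               # [2]
```

The conversation is never blocked: a post stays visible until the moderator pass removes it.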

- Reactive Moderation:

The defining characteristic of reactive moderation is that the users of social media platforms take part in reporting improper content that is posted online. It is most common in comment sections and discussions, where improper content, nasty comments, hate speech, cyberbullying, and conflicts need to be kept under control.

When users press the “report” option, harmful, improper, or vicious posts get the attention they deserve so that they do not spread or hurt the intended audience. As a result, it is regarded as a highly engaged and subjective kind of social media content moderation.

In this type of social media moderation, the opinions and decisions of numerous bystanders play a vital role in judging the content that users have posted.
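One way to picture reactive moderation is a report queue in which the posts reported by the most users are reviewed first. The sketch below assumes a hypothetical `ReportQueue` class and made-up post and user ids; the final decision still belongs to a moderator.

```python
class ReportQueue:
    """Collect user reports and order posts for review (illustrative only)."""

    def __init__(self):
        self.reports = {}  # post_id -> set of user ids that reported it

    def report(self, post_id: str, user_id: str) -> None:
        # A set ensures each user counts once per post.
        self.reports.setdefault(post_id, set()).add(user_id)

    def review_order(self) -> list:
        """Return reported post ids, most-reported first."""
        return sorted(self.reports,
                      key=lambda pid: len(self.reports[pid]),
                      reverse=True)

    def resolve(self, post_id: str, remove: bool) -> str:
        """Record a moderator's decision and clear the reports."""
        self.reports.pop(post_id, None)
        return "removed" if remove else "kept"


reports = ReportQueue()
reports.report("comment-1", "user-a")
reports.report("comment-2", "user-a")
reports.report("comment-2", "user-b")
reports.report("comment-2", "user-b")  # duplicate report, counted once

print(reports.review_order())             # ['comment-2', 'comment-1']
print(reports.resolve("comment-2", True)) # removed
```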

- User-only Moderation:

The user-only moderation method relies on users to decide whether or not the UGC is appropriate. When content is reported or flagged as improper several times by numerous users, it is automatically removed. The benefit of the user-only moderation method is that it is essentially free of charge, which lets platforms save money on content moderation.
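A minimal sketch of this threshold rule follows. The threshold of three distinct users is an assumed value, since the article does not state how many flags are needed, and the `UserOnlyModeration` class is a hypothetical name used only for this example.

```python
REPORT_THRESHOLD = 3  # assumed value; the article gives no specific number


class UserOnlyModeration:
    """Remove a post once enough distinct users flag it (illustrative only)."""

    def __init__(self, threshold: int = REPORT_THRESHOLD):
        self.threshold = threshold
        self.flags = {}       # post_id -> set of user ids that flagged it
        self.removed = set()  # post ids taken down automatically

    def flag(self, post_id: str, user_id: str) -> bool:
        """Record a flag; return True if the post is (now) removed."""
        if post_id in self.removed:
            return True
        self.flags.setdefault(post_id, set()).add(user_id)
        if len(self.flags[post_id]) >= self.threshold:
            self.removed.add(post_id)  # no moderator is involved
        return post_id in self.removed


moderation = UserOnlyModeration()
print(moderation.flag("post-9", "user-a"))  # False
print(moderation.flag("post-9", "user-a"))  # False: same user counted once
print(moderation.flag("post-9", "user-b"))  # False
print(moderation.flag("post-9", "user-c"))  # True: third distinct user
```

Counting distinct users rather than raw flags is a design choice made here so that one person cannot remove a post alone.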

Bottom Line

Today, people post whatever they can, and some of it is highly inappropriate. Young children and minors also use social media apps a great deal. For that reason, it is best to use content moderation so that published content is safe and appropriate, free of hate speech, violence, nudity, rule violations, and more.